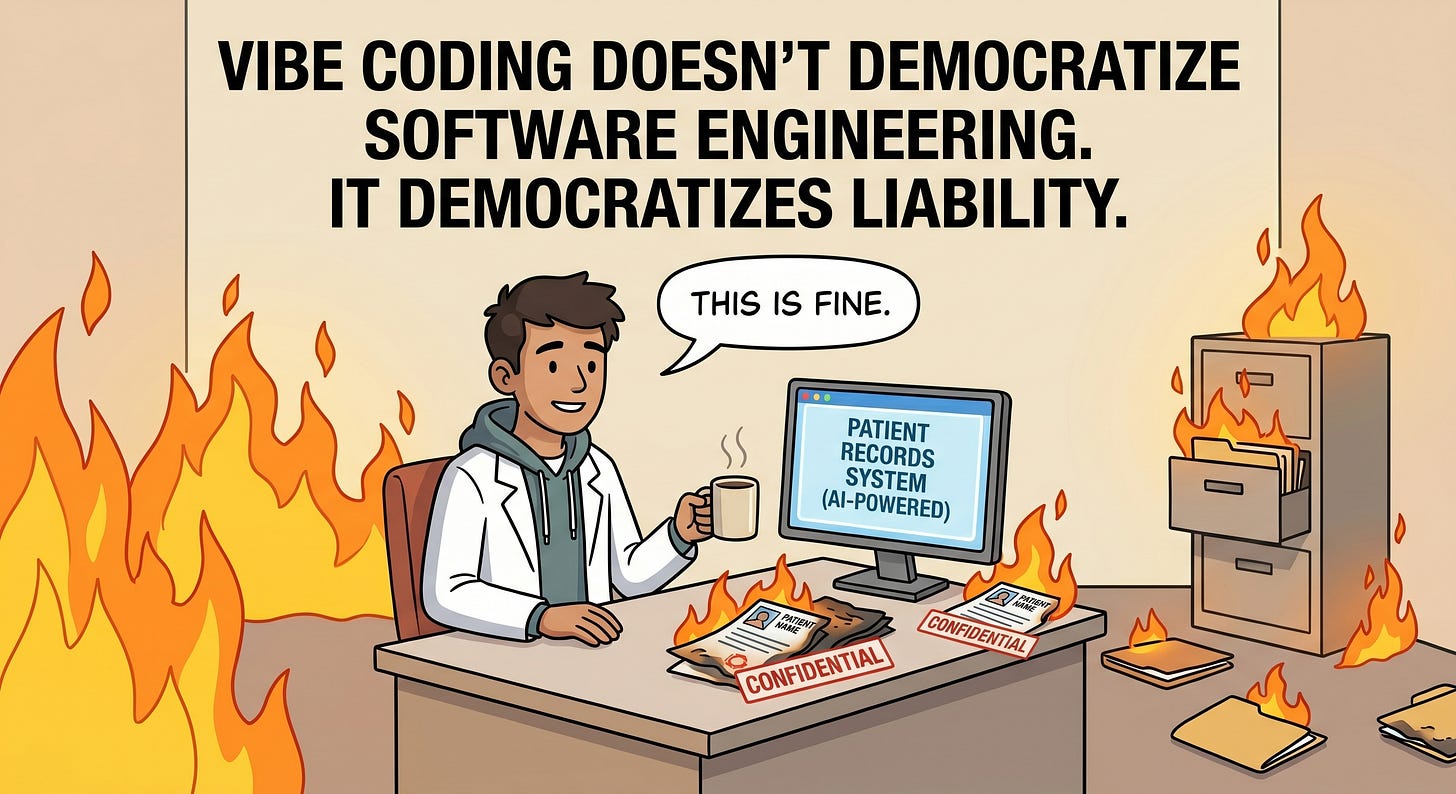

Vibe Coding doesn't democratize software engineering - it democratizes liability

This is one of the truest statements I've heard in a long while.

A few weeks ago, a Swiss developer named Tobias Brunner published a story that should make every healthcare professional pause. Someone watched a video about how easy it is to build software with AI, fired up a coding agent, and built their own patient management system. They imported all their patient data, published it to the open internet, and added a feature to send recorded appointment audio to two AI services for automatic summaries.

Thirty minutes of poking around and Tobias had full read and write access to every patient record. The entire application was a single HTML file. The “backend” was a managed database with zero access control. All security logic lived in client-side JavaScript - meaning the data was literally one curl command away from anyone who looked. Voice recordings of medical appointments were streaming to US-based AI APIs with no Data Processing Agreement, no patient consent, no encryption.

The person’s response to the disclosure? A 100% AI-generated reply thanking Tobias warmly and assuring him they’d taken “immediate action.”

“They had no idea what they’d built.” And that is the scariest part.

This Is the Default Outcome

This isn’t an edge case. This is what happens when you hand powerful tools to people without the knowledge to evaluate what those tools produce. The AI didn’t fail. It did exactly what it was asked to do. Nobody asked it to implement tenant isolation, encrypt data at rest, redact PHI from logs, validate consent before recording, or scope database access. So it didn’t.

The promise of “anyone can build software now” skips a critical detail: building software that works is not the same as building software that is safe. In healthcare especially, the gap between “it runs” and “it’s compliant” is where lawsuits, regulatory fines, and patient harm live.

So again: Vibe coding doesn’t democratize software engineering. It democratizes liability.

Don’t Get Me Wrong

I love the idea of doctors building their own tools. Doctors, clinic managers, practice administrators: these are the subject matter experts. They know what a good intake form looks like. They know which workflows waste hours every week. They know what their patients actually need. No product manager sitting in a San Francisco office will ever understand a specialty practice the way the people running it do.

That’s exactly why this matters. The problem isn’t that non-engineers are building software. The problem is that the tools they’re using offer no safety net. The creativity and domain expertise of a physician building their own scheduling tool or triage workflow is incredibly valuable. But right now, the tools just let you vibe your way into a HIPAA violation without even knowing it happened.

The answer isn’t to tell doctors to stop building. It’s to give them a platform where the dangerous parts are already handled.

What We’re Building Differently at Cara

At Cara, we’re building an AI-powered healthcare application builder. Doctors and clinic staff describe what they need, and Cara generates production-ready applications with EHR integrations and one-click deployment. We face the exact same fundamental challenge as the horror story above: AI is generating the code. The difference is in what happens around, before, and after that generation.

We believe the answer isn’t to slow down AI-assisted development. It’s to make security a property of the system, not a property of the developer’s knowledge.

Security by Design, Not by Hope

Here’s a non-exhaustive look at what’s already in place and what we’re actively hardening before we go to production:

Deterministic policy over LLM judgment. The single most important architectural decision we’ve made: in any generated application that involves clinical logic, the AI never chooses actions directly. Every clinical decision flows through a deterministic policy engine that maps evidence, risk estimates, and uncertainty scores to a finite set of allowed actions. The core safety property is explicit in the code: action <- policy(evidence, risk, uncertainty). Never action <- LLM. Red flags and abstention always dominate. The LLM does inference; deterministic code does control.
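Here is a minimal TypeScript sketch of what an `action <- policy(evidence, risk, uncertainty)` function could look like. The action names, the 0.3 uncertainty limit and the 0.7 risk threshold are illustrative assumptions, not Cara's actual values. What matters is the shape: the LLM supplies the inputs, and a plain function with a fixed rule order picks from a closed set of actions.

```typescript
// Hypothetical sketch: a deterministic policy over LLM-produced estimates.
// Action names and thresholds are illustrative assumptions.

type Action = "emergency_referral" | "abstain" | "clinician_review" | "self_care_info";

interface Assessment {
  redFlags: string[];  // e.g. ["chest_pain"], extracted upstream by an LLM
  risk: number;        // expected in [0, 1]
  uncertainty: number; // expected in [0, 1]
}

const MAX_UNCERTAINTY = 0.3; // assumed: above this, the system abstains
const HIGH_RISK = 0.7;       // assumed: at or above this, a clinician reviews

const inUnitRange = (x: number): boolean => Number.isFinite(x) && x >= 0 && x <= 1;

function policy({ redFlags, risk, uncertainty }: Assessment): Action {
  // 1. Red flags dominate every other signal.
  if (redFlags.length > 0) return "emergency_referral";
  // 2. Malformed or overly uncertain estimates lead to abstention, never a guess.
  if (!inUnitRange(risk) || !inUnitRange(uncertainty) || uncertainty > MAX_UNCERTAINTY) {
    return "abstain";
  }
  // 3. Only confident, well-formed estimates reach the risk threshold.
  return risk >= HIGH_RISK ? "clinician_review" : "self_care_info";
}

// Usage: the LLM fills in the Assessment; it never returns an Action itself.
console.log(policy({ redFlags: [], risk: 0.2, uncertainty: 0.1 })); // self_care_info
```

Red flags are checked first. Any out-of-range or overly uncertain estimate falls through to abstention. As a result, no combination of model outputs can produce an action outside the four listed.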

Three-layer content safety gate. Before any generation begins, user prompts pass through three tiers: deterministic regex rules (instant hard blocks), an LLM classifier for nuanced categorization, and a deterministic policy lookup that maps the classifier’s output to allow/block/nudge. The LLM sits in the middle, sandwiched between deterministic layers on both sides. On any classifier failure, the system fails open but logs the event.
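The next sketch shows the three tiers in TypeScript, under stated assumptions. The regex rules, the category labels and the policy table are hypothetical. The classifier is passed in as a function, so any LLM call can sit behind it. The failure branch mirrors the fail-open-and-log behavior described above.

```typescript
// Hypothetical three-tier prompt gate: regex rules -> LLM classifier -> policy table.

type Verdict = "allow" | "block" | "nudge";

interface GateResult {
  verdict: Verdict;
  reason: string;
}

// Tier 1: deterministic hard blocks (illustrative patterns only).
const HARD_BLOCK_RULES: RegExp[] = [
  /\bbypass\s+(hipaa|consent)\b/i,
  /\bdisable\s+(audit\s+logging|access\s+control)\b/i,
];

// Tier 3: deterministic mapping from classifier label to verdict (labels are assumed).
const POLICY_TABLE = new Map<string, Verdict>([
  ["app_request", "allow"],
  ["clinical_advice", "nudge"],
  ["harmful", "block"],
]);

// Tier 2 is injected: any function that returns a category label, e.g. an LLM call.
type Classifier = (prompt: string) => Promise<string>;

async function contentGate(
  prompt: string,
  classify: Classifier,
  log: (message: string) => void = console.warn,
): Promise<GateResult> {
  for (const rule of HARD_BLOCK_RULES) {
    if (rule.test(prompt)) return { verdict: "block", reason: `rule:${rule.source}` };
  }

  let label: string;
  try {
    label = await classify(prompt);
  } catch (err) {
    // Fail open, as described above, but always leave a log entry.
    log(`content classifier failed: ${String(err)}`);
    return { verdict: "allow", reason: "classifier_error" };
  }

  // Labels the table does not know get a nudge rather than passing straight through.
  return { verdict: POLICY_TABLE.get(label) ?? "nudge", reason: `label:${label}` };
}
```

The LLM only ever produces a label. Both the decision to hard-block and the mapping from label to verdict stay in deterministic code that can be unit-tested.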

Tenant isolation at the database layer. Every database query runs through a Prisma Client Extension that automatically injects tenant filters. You don’t opt into isolation. You’d have to actively circumvent it. This is the single most critical invariant in the system, and it’s enforced by the ORM, not by developer discipline.
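Prisma Client extensions expose a `query` component where arguments can be rewritten before every query runs. It is registered as `prisma.$extends({ query: { $allModels: { $allOperations({ operation, args, query }) { ... } } } })`. The sketch below shows only the rewriting step, as a plain, testable function. The name `scopeToTenant`, the `tenantId` column and the list of handled operations are assumptions for illustration. Inside such an extension, the hook would return `query(scopeToTenant(operation, args, currentTenantId))`.

```typescript
// Hypothetical argument rewriter a tenant-scoping Prisma extension could call.
// Assumes every tenant-owned model has a `tenantId` column.

type QueryArgs = { where?: Record<string, unknown>; data?: unknown; [key: string]: unknown };
type Row = Record<string, unknown>;

const WHERE_OPERATIONS = new Set([
  "findUnique", "findFirst", "findMany", "count",
  "update", "updateMany", "delete", "deleteMany",
]);

function scopeToTenant(operation: string, args: QueryArgs, tenantId: string): QueryArgs {
  if (!tenantId) throw new Error("No tenant in context; refusing to run query");

  if (WHERE_OPERATIONS.has(operation)) {
    // Spread first, then set tenantId, so a caller-supplied tenantId is overwritten.
    return { ...args, where: { ...(args.where ?? {}), tenantId } };
  }
  if (operation === "create") {
    return { ...args, data: { ...(args.data as Row), tenantId } };
  }
  if (operation === "createMany") {
    const rows = (Array.isArray(args.data) ? args.data : [args.data]) as Row[];
    return { ...args, data: rows.map((row) => ({ ...row, tenantId })) };
  }
  // Fail closed: an operation nobody has scoped yet is rejected, not passed through.
  throw new Error(`Operation "${operation}" is not tenant-scoped`);
}

// Usage: a caller asking for another tenant's rows still gets its own tenant.
console.log(scopeToTenant("findMany", { where: { tenantId: "other-clinic" } }, "clinic-a"));
// { where: { tenantId: 'clinic-a' } }
```

The fail-closed default is what turns "you'd have to actively circumvent it" into a property of the code. A new or unusual operation errors out until someone decides how it should be scoped.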

PHI redaction at three layers. Patient data is scrubbed from prompts before they reach any AI model (phi-sanitizer), from application logs (Pino logger with 109+ redacted field patterns), and from error tracking (Sentry beforeSend strips SSN, phone, email, DOB, MRN, names, and IP addresses). Every channel PHI could leak through, whether a model prompt, a log line, or an error report, has its own scrubber.
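The prompt-side scrubber could start as something like the pattern-based sketch below. The function name `sanitizePhi` and every regex are assumptions for illustration. Regexes alone will miss names and free-text identifiers, which is a good reason to layer defenses. For the other two layers, Pino's `redact` option (a list of paths plus a `censor` value) and Sentry's `beforeSend` hook are the natural places to apply the same idea.

```typescript
// Hypothetical pattern-based PHI scrubber for text headed to a model.
// Patterns are illustrative and US-centric; names and free text are NOT covered.

const PHI_PATTERNS: Array<[label: string, pattern: RegExp]> = [
  ["SSN", /\b\d{3}-\d{2}-\d{4}\b/g],
  ["EMAIL", /\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b/g],
  ["PHONE", /(?:\+1[ .-]?)?\(?\b\d{3}\)?[ .-]?\d{3}[ .-]?\d{4}\b/g],
  ["DOB", /\b\d{4}-\d{2}-\d{2}\b|\b\d{1,2}\/\d{1,2}\/\d{4}\b/g],
  ["MRN", /\bMRN[:#\s]*\d{6,10}\b/gi],
];

function sanitizePhi(text: string): string {
  // Order matters: SSNs are replaced before the looser phone pattern runs.
  let out = text;
  for (const [label, pattern] of PHI_PATTERNS) {
    out = out.replace(pattern, `[${label}]`);
  }
  return out;
}

console.log(sanitizePhi("DOB 1984-03-12, SSN 123-45-6789, call (415) 555-0123, MRN: 00123456"));
// DOB [DOB], SSN [SSN], call [PHONE], [MRN]
```

Replacing with typed placeholders such as `[PHONE]` rather than blanking the text keeps prompts readable for the model while the identifier itself never leaves the system.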

SSRF protection on every external connection. Every MCP server that connects to an EHR validates URLs against a private IP blocklist, blocks cloud metadata endpoints (169.254.169.254), prevents path traversal, and truncates responses at 50KB. OAuth token caches are closure-scoped per generation, so there is no cross-tenant token reuse.
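Here is a sketch of the URL checks, the response cap and a closure-scoped token cache in TypeScript. It only inspects the parsed hostname, so it relies on the WHATWG `URL` parser normalizing numeric forms like `0x7f.0.0.1` to `127.0.0.1`. A production check would also resolve DNS and re-check the resulting address to defeat DNS rebinding. It would also cover IPv6 ranges properly instead of rejecting every IPv6 literal. The helper names and the cache shape are hypothetical.

```typescript
// Hypothetical outbound-URL guard for EHR connectors, plus a response cap and
// a per-generation token cache. Names and rules are illustrative assumptions.

const MAX_RESPONSE_BYTES = 50 * 1024; // the 50KB cap described above
const BLOCKED_HOSTNAMES = new Set(["localhost", "metadata.google.internal"]);

function isPrivateIPv4(host: string): boolean {
  const m = /^(\d{1,3})\.(\d{1,3})\.(\d{1,3})\.(\d{1,3})$/.exec(host);
  if (!m) return false;
  const a = Number(m[1]);
  const b = Number(m[2]);
  return (
    a === 0 || a === 10 || a === 127 ||
    (a === 100 && b >= 64 && b <= 127) || // carrier-grade NAT
    (a === 169 && b === 254) ||           // link-local, incl. 169.254.169.254
    (a === 172 && b >= 16 && b <= 31) ||
    (a === 192 && b === 168)
  );
}

function validateOutboundUrl(raw: string): URL {
  // Reject literal or percent-encoded ".." segments before the parser normalizes them away.
  if (/(?:^|[\/\\])(?:\.|%2e){2}(?:[\/\\?#]|$)/i.test(raw)) throw new Error("Path traversal");
  const url = new URL(raw); // throws on malformed input
  if (url.protocol !== "https:") throw new Error("Only https is allowed");
  // The WHATWG parser normalizes numeric hosts, e.g. 0x7f.0.0.1 -> 127.0.0.1.
  const host = url.hostname.toLowerCase();
  if (BLOCKED_HOSTNAMES.has(host) || host.endsWith(".localhost")) throw new Error("Blocked host");
  if (host.startsWith("[")) throw new Error("IPv6 literals are rejected in this sketch");
  if (isPrivateIPv4(host)) throw new Error("Private or metadata address");
  return url;
}

function truncateResponse(body: string): string {
  const bytes = new TextEncoder().encode(body);
  if (bytes.length <= MAX_RESPONSE_BYTES) return body;
  // A multi-byte character split at the cut is decoded as U+FFFD.
  return new TextDecoder().decode(bytes.slice(0, MAX_RESPONSE_BYTES));
}

// Each generation builds its own closure, so no token can be shared across generations.
function createGenerationTokenCache() {
  const tokens = new Map<string, string>();
  return {
    get: (connector: string): string | undefined => tokens.get(connector),
    set: (connector: string, token: string): void => {
      tokens.set(connector, token);
    },
  };
}
```

The cache relies on scoping rather than key naming. Because the `Map` is unreachable outside the closure, a bug in how keys are built cannot leak one tenant's token to another generation.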

Static compliance scanning of generated code. Every generated application is scanned against HIPAA, SOC 2, and HITRUST checklists before delivery. A separate agent-safety scanner checks for unsafe AI patterns: direct LLM-to-clinical assignment, unvalidated structured output, missing emergency referral patterns, dangerouslySetInnerHTML with LLM content. Critical findings block the generation.
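A toy version of the agent-safety scanner might look like this. The rule IDs and regexes are hypothetical and deliberately naive; a real scanner would work on the syntax tree rather than on lines of text. Still, they show the control flow the paragraph describes: each finding carries a severity, and any critical finding blocks the generation.

```typescript
// Hypothetical line-based agent-safety scanner; rules are illustrative only.

type Severity = "critical" | "warning";

interface Rule {
  id: string;
  severity: Severity;
  pattern: RegExp;
  message: string;
}

interface Finding {
  ruleId: string;
  severity: Severity;
  line: number; // 1-based
  message: string;
}

const AGENT_SAFETY_RULES: Rule[] = [
  {
    id: "llm-html-injection",
    severity: "critical",
    pattern: /dangerouslySetInnerHTML\s*=\s*\{\{\s*__html:\s*\w*(llm|completion)/i,
    message: "LLM output rendered as raw HTML",
  },
  {
    id: "llm-direct-clinical-assignment",
    severity: "critical",
    pattern: /\b(triage|disposition|clinicalAction)\w*\s*=\s*(await\s+)?\w*(llm|completion)/i,
    message: "Clinical field assigned directly from LLM output",
  },
  {
    id: "unvalidated-structured-output",
    severity: "warning",
    pattern: /JSON\.parse\(\s*\w*(llm|completion)/i,
    message: "LLM JSON parsed without schema validation",
  },
];

function scanGeneratedCode(source: string): { findings: Finding[]; blocked: boolean } {
  const findings: Finding[] = [];
  source.split("\n").forEach((text, index) => {
    for (const rule of AGENT_SAFETY_RULES) {
      if (rule.pattern.test(text)) {
        findings.push({ ruleId: rule.id, severity: rule.severity, line: index + 1, message: rule.message });
      }
    }
  });
  // Any critical finding blocks delivery of the generated app.
  return { findings, blocked: findings.some((f) => f.severity === "critical") };
}
```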

Automated security review in every commit. Our development pipeline mandates /review-code and /secure checks on every change before it can be committed. No exceptions. After parallel agent work, the final integrated diff gets reviewed again, because changes that are individually safe can interact in unsafe ways. (We caught an SSRF vulnerability this way.)

The Publish Gate: Compliance Audit Before Every Deploy

Here’s where this gets concrete for the people actually using Cara. Before you can publish any application, the platform runs an automated compliance audit against HIPAA and SOC 2 checklists, with HITRUST checks available as well (not mandatory, but there if you want them). This audit is a hybrid: AI-powered analysis of the generated code combined with deterministic, rule-based verification. It checks for things like unencrypted data flows, missing access controls, PHI exposure in client-side code, unsafe API patterns, and dozens of other compliance signals. You get a clear report. Pass, warn, fail. No ambiguity.
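The final pass/warn/fail decision can stay deterministic even when some findings come from an AI reviewer. This sketch makes two assumptions: both sources report findings in one shape, and HITRUST findings only count when the user opts in. The type and function names are illustrative, not Cara's API.

```typescript
// Hypothetical publish-gate aggregation over rule-based and AI-generated findings.

type Framework = "HIPAA" | "SOC2" | "HITRUST";
type Severity = "info" | "warning" | "critical";
type Status = "pass" | "warn" | "fail";

interface AuditFinding {
  source: "rule" | "ai"; // deterministic check or AI-powered analysis
  framework: Framework;
  severity: Severity;
  check: string;
}

interface PublishDecision {
  status: Status;
  blocking: AuditFinding[];
}

function publishVerdict(findings: AuditFinding[], includeHitrust = false): PublishDecision {
  // HITRUST checks are optional, so their findings count only when the user opts in.
  const relevant = findings.filter((f) => f.framework !== "HITRUST" || includeHitrust);
  // A critical finding fails the audit whether a rule or the AI raised it.
  const blocking = relevant.filter((f) => f.severity === "critical");
  if (blocking.length > 0) return { status: "fail", blocking };
  const hasWarning = relevant.some((f) => f.severity === "warning");
  return { status: hasWarning ? "warn" : "pass", blocking: [] };
}
```

Because this step is deterministic, the same set of findings always yields the same verdict. Any human sign-off then sits on top of that verdict rather than replacing it.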

And if you want a human in the loop before you go live, you can request a support engineer to do a final security and reliability review before the publish goes through. Someone who actually reads the code, checks the integration points, and signs off. Not everyone will need this. But for applications that handle sensitive clinical workflows or connect to EHR systems, having that option is the difference between “I think this is safe” and “someone verified that this is safe.”

The goal is simple: you should never be able to accidentally publish something dangerous. The automated audit catches the obvious problems. The optional human review catches the subtle ones. And the underlying architecture makes entire categories of vulnerabilities impossible in the first place.

Our Approach to Compliance

We run regular internal compliance audits against HIPAA, SOC 2, and HITRUST frameworks. Hundreds of checks across the full codebase. We track findings, prioritize remediation, and work through them systematically before going to production.

We’re not going to publish the specifics of what we find (for obvious reasons), but we will say this: the core architecture passes cleanly. Tenant isolation, encryption at rest and in transit, PHI redaction, audit trails, infrastructure hardening, BAA workflows, error handling. The foundation is strong.

Where we find gaps, we fix them. Where we find warnings, we evaluate and prioritize. We have a structured remediation roadmap and we’re working through it sprint by sprint. Transparency about the process matters. Transparency about specific unpatched findings does not.

The Point

The horror story from Switzerland isn’t about AI being dangerous. It’s about the absence of everything that surrounds the code. Access control, encryption, consent workflows, audit logging, tenant isolation, compliance scanning, PHI redaction: none of these are things a vibe-coded app will ever have unless someone who understands them builds the system that provides them.

That’s what Cara is. Not an AI that writes code for you and wishes you luck. A platform where the security architecture is the product, and the AI operates inside guardrails that exist whether the user knows about them or not.

We believe the right response to “anyone can build healthcare software with AI” isn’t to pretend they can’t. It’s to make the safe path the only path.

My name is Nils Widal, Co-Founder and CTO at Cara. I love music and cooking and live in the Mission in San Francisco.

I'll share a quirky music piece generated by Suno.com with each new blog post here. Please enjoy!